Data is being generated at an unprecedented rate. From social media interactions and e-commerce transactions to IoT devices and enterprise systems, organizations handle large volumes of data every second. Managing this data efficiently is no longer optional; it is essential for business success.

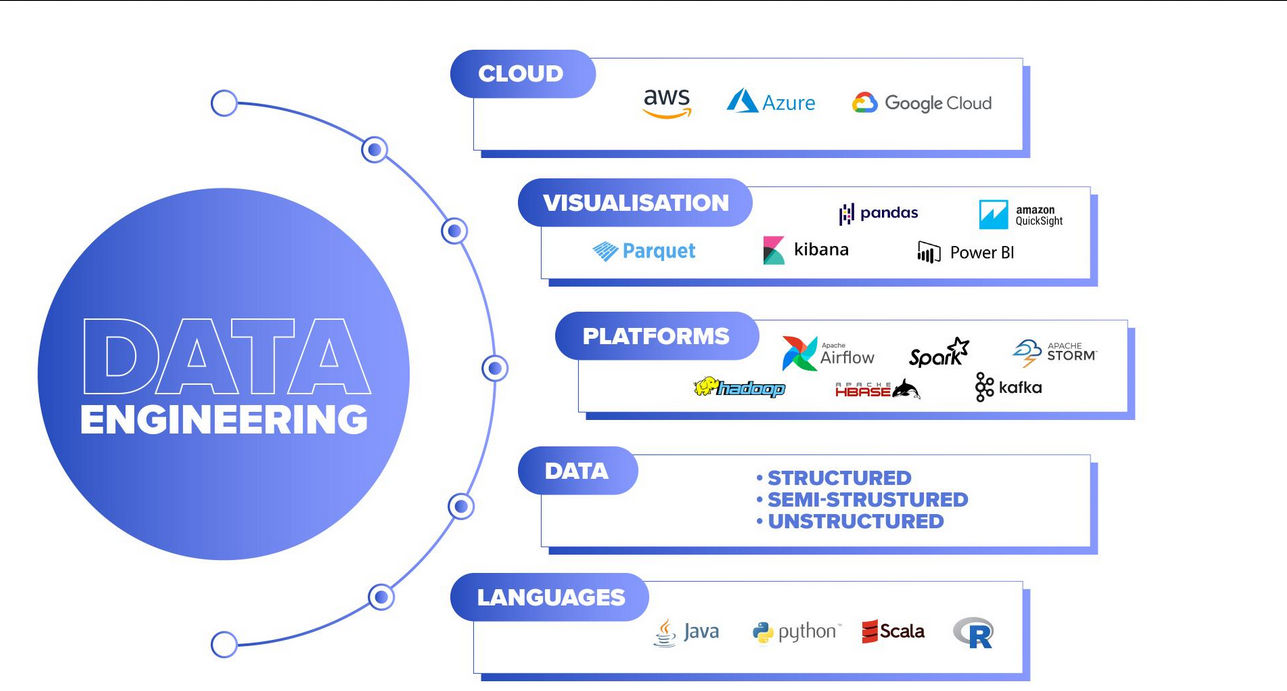

This is where data engineering plays a crucial role. Data engineering provides the tools, frameworks, and infrastructure needed to collect, process, store, and analyze massive datasets effectively.

In this guide, we explore how to manage large-scale data with data engineering, covering best practices, tools, strategies, and real-world applications.

Understanding Large-Scale Data

Large-scale data, often referred to as big data, is characterized by the famous “3 Vs”:

- Volume: Massive amounts of data (terabytes to petabytes)

- Velocity: High speed of data generation and processing

- Variety: Different types of data (structured, semi-structured, unstructured)

Managing such data requires advanced systems that can handle complexity and scale.

What Is Data Engineering in Big Data Management?

Data engineering involves building systems that turn raw data, whether structured, semi-structured, or unstructured, into clean, consistent formats suitable for analysis.

In large-scale environments, data engineering focuses on:

- Designing scalable data pipelines

- Handling distributed data processing

- Ensuring data reliability and consistency

- Supporting real-time and batch processing

Without data engineering, large-scale data quickly becomes inconsistent, hard to find, and difficult to use.

Key Challenges in Managing Large-Scale Data

Before diving into solutions, it’s important to understand the challenges:

1. Data Volume Explosion

Organizations generate enormous amounts of data daily, making storage and processing difficult.

2. Data Integration

Combining data from multiple sources can be complex and time-consuming.

3. Data Quality Issues

Inconsistent, duplicate, or missing data can lead to inaccurate insights.

4. Scalability

Systems must scale as data grows without performance degradation.

5. Real-Time Processing Needs

Businesses increasingly require instant insights from streaming data.

Core Principles of Managing Large-Scale Data

To effectively manage large-scale data, data engineers follow several key principles:

1. Build Scalable Architectures

Scalability is the foundation of big data systems. Favor distributed systems that scale horizontally, handling larger workloads by adding more machines rather than relying on one ever-larger server.

Examples:

- Distributed computing frameworks

- Cloud-based infrastructure
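
One way distributed systems spread work across machines is hash partitioning. The sketch below is a minimal illustration, not a production scheme: the `shard_for` helper and the `user-N` keys are hypothetical, and it only shows that the same data lands on more, smaller shards when resources are added.

```python
import hashlib
from collections import Counter


def shard_for(key: str, num_shards: int) -> int:
    """Map a key to a shard index using a stable hash of the key."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    # Use the first 8 bytes of the digest as an integer, then wrap to the shard count.
    return int.from_bytes(digest[:8], "big") % num_shards


# Hypothetical record keys; a real system would use user IDs, order IDs, and so on.
keys = [f"user-{i}" for i in range(1000)]

# Number of keys assigned to each shard with 4 shards, then with 8 shards.
load_4 = Counter(shard_for(k, 4) for k in keys)
load_8 = Counter(shard_for(k, 8) for k in keys)

print("4 shards:", dict(sorted(load_4.items())))
print("8 shards:", dict(sorted(load_8.items())))
```

Plain modulo hashing reassigns many keys whenever the shard count changes, so systems that resize often tend to use consistent hashing or a similar scheme instead.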

2. Use Data Pipelines

Data pipelines automate the flow of data from source to destination.

A typical pipeline includes:

- Data ingestion

- Data transformation

- Data storage

Automated pipelines reduce manual work and improve efficiency.
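
The three stages above can be sketched with plain Python generators. This is a toy example under stated assumptions: the `RAW_CSV` data, the field names, and the in-memory `sink` are all hypothetical stand-ins for real sources and storage.

```python
import csv
import io
import json
from typing import Iterable, Iterator

# Hypothetical raw input; a real pipeline would read from files, APIs, or queues.
RAW_CSV = """order_id,amount,country
1001,19.99,us
1002,5.00,de
1003,42.50,us
"""


def ingest(raw: str) -> Iterator[dict]:
    """Ingestion: parse raw CSV text into one dict per row."""
    yield from csv.DictReader(io.StringIO(raw))


def transform(rows: Iterable[dict]) -> Iterator[dict]:
    """Transformation: cast types and normalize values."""
    for row in rows:
        yield {
            "order_id": int(row["order_id"]),
            "amount": float(row["amount"]),
            "country": row["country"].upper(),
        }


def store(rows: Iterable[dict], sink: io.StringIO) -> int:
    """Storage: write rows as JSON Lines and return the number written."""
    count = 0
    for row in rows:
        sink.write(json.dumps(row) + "\n")
        count += 1
    return count


sink = io.StringIO()  # stands in for a file, object store, or table
written = store(transform(ingest(RAW_CSV)), sink)
print(f"wrote {written} rows")
```

Because each stage is a generator, rows flow through one at a time and the full dataset never has to fit in memory at once.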

3. Ensure Data Quality

High-quality data is essential for accurate analysis. Implement:

- Data validation rules

- Deduplication processes

- Error handling mechanisms
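
As a rough illustration of these three measures, the sketch below validates records, drops duplicates, and sets rejected rows aside instead of failing the whole run. The rules, the field names (`user_id`, `email`), and the sample records are assumptions made for the example.

```python
from typing import Iterable


def validate(record: dict) -> list[str]:
    """Return a list of rule violations; an empty list means the record is valid."""
    errors = []
    if not record.get("user_id"):
        errors.append("missing user_id")
    email = record.get("email")
    if not isinstance(email, str) or "@" not in email:
        errors.append("invalid email")
    return errors


def clean(records: Iterable[dict]) -> tuple[list[dict], list[tuple[dict, list[str]]]]:
    """Split records into valid, deduplicated rows and rejected rows with reasons."""
    seen = set()
    valid, rejected = [], []
    for record in records:
        errors = validate(record)
        if errors:
            # Error handling: keep the bad row and its reasons for later review.
            rejected.append((record, errors))
            continue
        key = record["user_id"]
        if key in seen:
            continue  # Deduplication: skip rows whose key was already accepted.
        seen.add(key)
        valid.append(record)
    return valid, rejected


# Hypothetical sample data.
records = [
    {"user_id": "u1", "email": "a@example.com"},
    {"user_id": "u1", "email": "a@example.com"},  # duplicate
    {"user_id": "", "email": "b@example.com"},    # missing id
    {"user_id": "u2", "email": "not-an-email"},   # bad email
]
valid, rejected = clean(records)
print(len(valid), "valid,", len(rejected), "rejected")
```

Keeping rejected rows with their reasons, rather than silently dropping them, makes it easier to trace quality problems back to a source.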

4. Adopt Batch and Real-Time Processing

Depending on business needs, you can use:

- Batch processing for large datasets processed periodically

- Real-time processing for instant insights

A hybrid approach often works well: batch jobs for complete, periodic recomputation and streaming for low-latency updates.
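
The difference between the two modes can be shown on the same data. In this simplified sketch, the `events` list is hypothetical and the "stream" is just an iterator; a real system would read from a message broker.

```python
from collections import defaultdict
from typing import Iterable, Iterator

# Hypothetical click events as (page, count) pairs.
events = [("page_a", 1), ("page_b", 1), ("page_a", 1), ("page_a", 1)]


def batch_counts(all_events: Iterable[tuple[str, int]]) -> dict[str, int]:
    """Batch: read the whole dataset, then return the final totals once."""
    totals = defaultdict(int)
    for key, n in all_events:
        totals[key] += n
    return dict(totals)


def streaming_counts(stream: Iterable[tuple[str, int]]) -> Iterator[tuple[str, int]]:
    """Streaming: emit an updated running total after every event."""
    totals = defaultdict(int)
    for key, n in stream:
        totals[key] += n
        yield key, totals[key]


batch_result = batch_counts(events)
stream_updates = list(streaming_counts(iter(events)))

print("batch:", batch_result)
print("stream:", stream_updates)
```

The batch function answers once, after seeing everything; the streaming function produces an answer that is always current but never final.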

5. Optimize Storage Solutions

Choosing the right storage system is critical. Options include:

- Data lakes for raw data

- Data warehouses for structured data

- Hybrid lakehouse architectures

Data Architecture for Large-Scale Data

A well-designed data architecture is essential for managing big data.

1. Data Lake Architecture

Data lakes store raw data in its original format.

Benefits:

- Flexible storage

- Supports all data types

- Cost-effective

2. Data Warehouse Architecture

Data warehouses store structured data optimized for analysis.

Benefits:

- Fast query performance

- Reliable reporting

- Structured schema

3. Lakehouse Architecture

A lakehouse aims to combine the strengths of both:

- Flexibility of data lakes

- Performance of data warehouses

Many modern data platforms are adopting this architecture.

Essential Tools for Managing Large-Scale Data

Data engineers rely on various tools to manage big data effectively.

1. Data Processing Tools

- Apache Spark (distributed batch and stream processing)

- Apache Flink (stateful stream processing)

- Apache Hadoop (distributed storage and MapReduce batch processing)

2. Data Storage Tools

- Amazon S3

- Google Cloud Storage

- Azure Data Lake Storage

3. Data Integration Tools

- Airbyte

- Fivetran

- Talend

4. Workflow Orchestration Tools

- Apache Airflow

- Prefect

- Dagster

5. Streaming Tools

- Apache Kafka

- Apache Pulsar

These tools help automate and scale data operations.

Best Practices for Managing Large-Scale Data

To ensure success, follow these best practices:

1. Automate Everything

Automation reduces manual errors and improves efficiency. Use orchestration tools to automate workflows.
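
At their core, orchestration tools run tasks in dependency order. The sketch below imitates that idea with Python's standard-library `graphlib`; it is not how Airflow, Prefect, or Dagster are used in practice, and the task names are hypothetical.

```python
from graphlib import TopologicalSorter

run_log = []  # records the order in which tasks actually ran


def ingest():
    run_log.append("ingest")


def transform():
    run_log.append("transform")


def validate():
    run_log.append("validate")


def load():
    run_log.append("load")


tasks = {"ingest": ingest, "transform": transform, "validate": validate, "load": load}

# Each task maps to the set of tasks that must finish before it starts.
dependencies = {
    "ingest": set(),
    "transform": {"ingest"},
    "validate": {"transform"},
    "load": {"validate"},
}

# static_order() yields every task after all of its prerequisites.
for name in TopologicalSorter(dependencies).static_order():
    tasks[name]()

print(" -> ".join(run_log))
```

Real orchestrators add scheduling, retries, and alerting on top of this dependency ordering.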

2. Monitor Data Pipelines

Implement monitoring systems to track:

- Pipeline performance

- Data quality

- System errors
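
A minimal way to collect these signals is to wrap each pipeline stage and record counts and timing. The `StageMetrics` class and `run_stage` helper below are illustrative names, not part of any monitoring library.

```python
import time
from dataclasses import dataclass
from typing import Any, Callable, Iterable


@dataclass
class StageMetrics:
    """Counters for one pipeline stage."""
    name: str
    rows_in: int = 0
    rows_out: int = 0
    errors: int = 0
    seconds: float = 0.0

    @property
    def drop_rate(self) -> float:
        """Fraction of input rows that did not reach the output."""
        return 0.0 if self.rows_in == 0 else 1 - self.rows_out / self.rows_in


def run_stage(name: str, func: Callable[[Any], Any], rows: Iterable[Any]):
    """Apply func to each row, counting inputs, outputs, errors, and elapsed time."""
    metrics = StageMetrics(name=name)
    output = []
    start = time.perf_counter()
    for row in rows:
        metrics.rows_in += 1
        try:
            output.append(func(row))
        except (ValueError, TypeError):
            metrics.errors += 1  # count the failure and keep processing
    metrics.seconds = time.perf_counter() - start
    metrics.rows_out = len(output)
    return output, metrics


# Hypothetical stage: parse strings into floats; one value is malformed.
parsed, metrics = run_stage("parse_amount", float, ["1.5", "2", "oops", "4.25"])
print(metrics)
```

In practice these numbers would be sent to a metrics system and compared against thresholds, for example alerting when `drop_rate` rises above an agreed limit.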

3. Implement Data Governance

Data governance ensures:

- Data security

- Compliance with regulations

- Proper data usage

4. Use Cloud Infrastructure

Cloud platforms offer:

- Scalability

- Flexibility

- Cost efficiency

They eliminate the need for managing physical hardware.

5. Optimize Performance

Improve system performance by:

- Partitioning data

- Indexing databases

- Caching frequently accessed data
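
Two of these techniques are easy to show in miniature. The sketch below partitions hypothetical events by date so a query reads only the partition it needs, and caches results with `functools.lru_cache` so repeated queries skip the scan. The `events` data and `daily_total` function are assumptions made for the example.

```python
from collections import defaultdict
from functools import lru_cache

# Hypothetical event rows.
events = [
    {"date": "2024-01-01", "amount": 10.0},
    {"date": "2024-01-01", "amount": 5.0},
    {"date": "2024-01-02", "amount": 7.5},
]

# Partitioning: group rows by date so a query for one day skips all other days.
partitions = defaultdict(list)
for event in events:
    partitions[event["date"]].append(event)

scans = []  # records which partitions were actually read


@lru_cache(maxsize=128)
def daily_total(date: str) -> float:
    """Sum amounts for one date, reading only that date's partition."""
    scans.append(date)
    return sum(e["amount"] for e in partitions.get(date, []))


first = daily_total("2024-01-01")   # scans the partition
second = daily_total("2024-01-01")  # served from the cache, no scan

print(first, second, scans)
```

A cache like this is only safe while the underlying partition does not change; if new rows arrive, the cached entry has to be invalidated, for example with `daily_total.cache_clear()`.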

Real-World Use Cases

1. E-Commerce Platforms

Large e-commerce companies manage:

- Millions of transactions

- Customer behavior data

- Inventory systems

Data engineering helps optimize pricing, recommendations, and logistics.

2. Financial Services

Banks and fintech companies use data engineering for:

- Fraud detection

- Risk analysis

- Real-time transaction monitoring

3. Healthcare Systems

Healthcare organizations manage:

- Patient records

- Medical imaging data

- Research datasets

Efficient data engineering improves patient care and research outcomes.

4. Social Media Platforms

Social media companies process:

- Billions of user interactions

- Real-time content streams

Data engineering enables personalized feeds and targeted advertising.

Step-by-Step Guide to Managing Large-Scale Data

If you’re starting from scratch, follow this roadmap:

Step 1: Define Your Data Strategy

Identify:

- Data sources

- Business goals

- Key metrics

Step 2: Choose the Right Architecture

Decide between:

- Data lake

- Data warehouse

- Lakehouse

Step 3: Build Data Pipelines

Create pipelines for:

- Data ingestion

- Transformation

- Storage

Step 4: Implement Processing Systems

Use a processing framework such as Spark or Flink to run transformations at scale.

Step 5: Ensure Data Quality

Set up validation and cleaning processes.

Step 6: Monitor and Optimize

Continuously improve performance and reliability.

Future Trends in Large-Scale Data Management

Data engineering is evolving rapidly.

1. AI-Powered Data Pipelines

Automation using AI will reduce manual effort and improve efficiency.

2. Real-Time Data Everywhere

More businesses will adopt real-time analytics.

3. Data Observability

Monitoring tools will become more advanced, providing deeper insights into data pipelines.

4. Serverless Data Engineering

Serverless platforms will simplify infrastructure management.

Why Data Engineering Is Critical for Business Growth

Managing large-scale data effectively provides several benefits:

- Better decision-making

- Improved operational efficiency

- Enhanced customer experiences

- Competitive advantage

- Support for AI and machine learning

Organizations that leverage data engineering can unlock the full potential of their data.

Conclusion

Managing large-scale data is one of the biggest challenges—and opportunities—facing modern businesses. Data engineering provides the foundation needed to handle massive datasets efficiently, ensuring that data is accessible, reliable, and ready for analysis.

By implementing scalable architectures, automated pipelines, and robust data governance practices, organizations can transform raw data into valuable insights that drive growth and innovation.

As data continues to grow in volume and importance, mastering data engineering will be essential for any business looking to succeed in the digital age.